About this knowledge base

This post gives an overview of what this knowledge base covers.

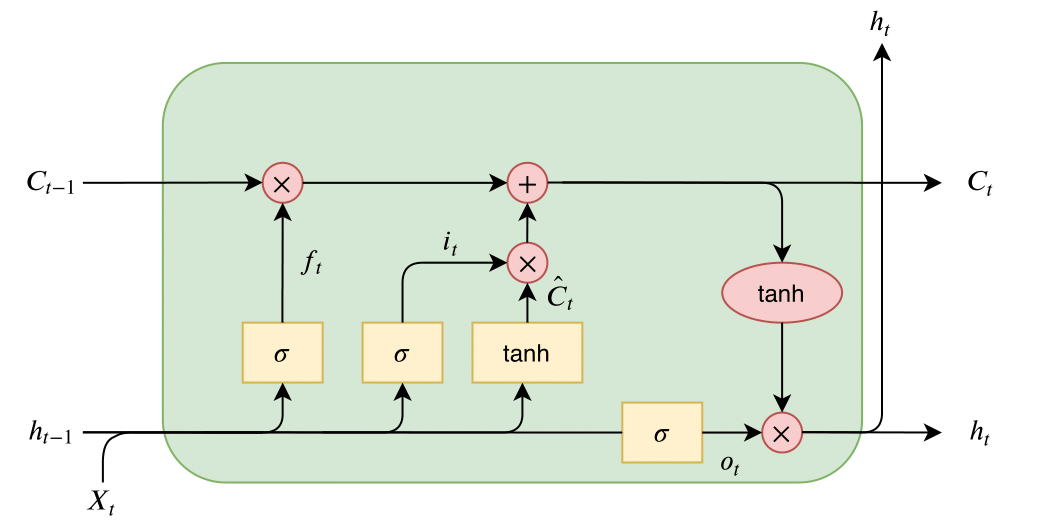

Lecture 3: Covers language models before transformers: RNNs, Seq2Seq, and LSTM models

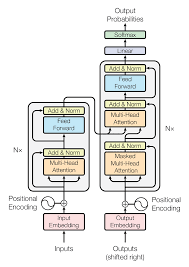

Lecture 4: Covers the transformer architecture in general and begins the discussion of transformer language models

Lecture 7: Covers the basics of training and optimizing neural networks