The Transformer


This post covers the fourth lecture in the course: “The Transformer.”

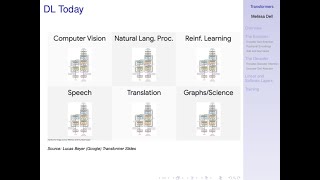

The transformer architecture revolutionized NLP and has since made substantial inroads into most areas of deep learning (vision, audio, reinforcement learning…). This lecture covers a lot of ground that will be foundational to the rest of the course, so please plan to devote sufficient attention (no pun intended) to this material.

Lecture Video

References Cited in Lecture 4: The Transformer and Transformer Language Models

Academic Papers

The Original Transformer

- Vaswani, Ashish, Noam Shazeer, Niki Parmar, Jakob Uszkoreit, Llion Jones, Aidan N. Gomez, Łukasz Kaiser, and Illia Polosukhin. “Attention is all you need.” In Advances in Neural Information Processing Systems, pp. 5998-6008. 2017.

Transformer Language Models

- Devlin, Jacob, Ming-Wei Chang, Kenton Lee, and Kristina Toutanova. “BERT: Pre-training of deep bidirectional transformers for language understanding.” arXiv preprint arXiv:1810.04805 (2018).

- Liu, Yinhan, Myle Ott, Naman Goyal, Jingfei Du, Mandar Joshi, Danqi Chen, Omer Levy, Mike Lewis, Luke Zettlemoyer, and Veselin Stoyanov. “RoBERTa: A robustly optimized BERT pretraining approach.” arXiv preprint arXiv:1907.11692 (2019).

- Lan, Zhenzhong, Mingda Chen, Sebastian Goodman, Kevin Gimpel, Piyush Sharma, and Radu Soricut. “ALBERT: A lite BERT for self-supervised learning of language representations.” arXiv preprint arXiv:1909.11942 (2019).

- Sanh, Victor, Lysandre Debut, Julien Chaumond, and Thomas Wolf. “DistilBERT, a distilled version of BERT: smaller, faster, cheaper and lighter.” arXiv preprint arXiv:1910.01108 (2019).

- Brown, Tom B., Benjamin Mann, Nick Ryder, Melanie Subbiah, Jared Kaplan, Prafulla Dhariwal, Arvind Neelakantan et al. “Language models are few-shot learners.” arXiv preprint arXiv:2005.14165 (2020). On OpenAI giving Microsoft exclusive access to GPT-3, see https://www.technologyreview.com/2020/09/23/1008729/openai-isgiving-microsoft-exclusive-access-to-its-gpt-3-language-model/. See also: Radford, Alec, Jeffrey Wu, Rewon Child, David Luan, Dario Amodei, and Ilya Sutskever. “Language models are unsupervised multitask learners.” OpenAI blog 1, no. 8 (2019): 9.

- Tay, Yi, Vinh Q. Tran, Sebastian Ruder, Jai Gupta, Hyung Won Chung, Dara Bahri, Zhen Qin, Simon Baumgartner, Cong Yu, and Donald Metzler. “Charformer: Fast character transformers via gradient-based subword tokenization.” arXiv preprint arXiv:2106.12672 (2021).

- Nguyen, Dat Quoc, Thanh Vu, and Anh Tuan Nguyen. “BERTweet: A pre-trained language model for English Tweets.” arXiv preprint arXiv:2005.10200 (2020).

Other Resources

- “The Annotated Transformer” (a guide that annotates the paper alongside a PyTorch implementation)

- “The Illustrated Transformer”

- “Transformers-based Encoder-Decoder Models”

- “Glass Box: The Transformer – Attention is All you Need”

- “The Illustrated BERT, ELMo, and co. (How NLP Cracked Transfer Learning)”

- “The Illustrated GPT-2 (Visualizing Transformer Language Models)”

Code Bases

- OPT (Open Pre-trained Transformers) from FAIR

- Hugging Face: an open-source library with a large variety of NLP models. See the Transformers repo for most applications. Most of the models referenced above (BERT, BERTweet, RoBERTa, DistilBERT) are implemented there and are easily accessed through the Hugging Face APIs.
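As a minimal sketch of what using these APIs looks like, the snippet below assumes the `transformers` and `torch` packages are installed. In practice you would usually load pretrained weights with something like `BertModel.from_pretrained("bert-base-uncased")`; to avoid a download, this sketch instead builds a tiny, randomly initialized BERT from a `BertConfig` whose sizes are illustrative assumptions, so the outputs are meaningless and only their shapes matter.

```python
import torch
from transformers import BertConfig, BertModel

# A tiny, randomly initialized BERT. These sizes are illustrative assumptions,
# not the configuration of any released checkpoint.
config = BertConfig(
    vocab_size=100,
    hidden_size=32,
    num_hidden_layers=2,
    num_attention_heads=4,  # hidden_size must be divisible by the number of heads
    intermediate_size=64,
)
model = BertModel(config)
model.eval()  # disable dropout so the forward pass is deterministic

# One sequence of five arbitrary token ids (batch size 1).
input_ids = torch.tensor([[1, 5, 7, 9, 2]])
with torch.no_grad():
    outputs = model(input_ids=input_ids)

# Contextual embeddings for every token: (batch, seq_len, hidden_size).
hidden = outputs.last_hidden_state
print(hidden.shape)  # torch.Size([1, 5, 32])
```

The same `from_pretrained` pattern applies to the other checkpoints mentioned above, either through their model-specific classes or through the generic `AutoModel` and `AutoTokenizer` classes.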

Image source: Vaswani et al. (2017), “Attention Is All You Need.”