HJDataset : A Large Dataset of Historical Japanese Documents with Complex Layouts

Abstract

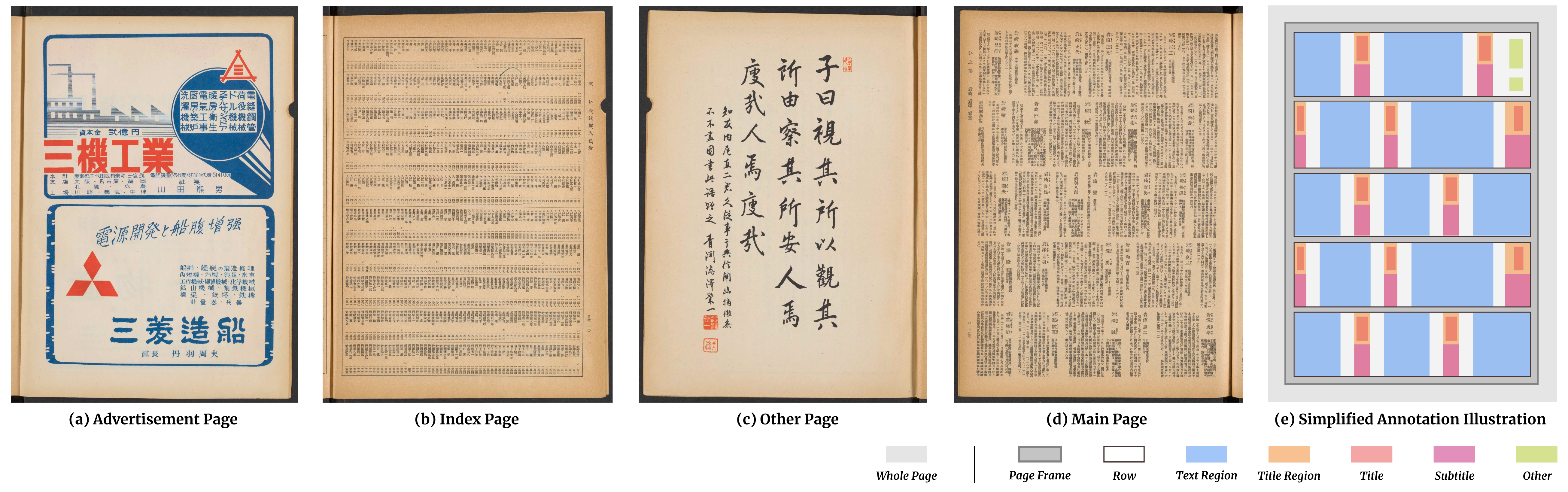

Deep learning-based approaches for automatic document layout analysis and content extraction have the potential to unlock rich information trapped in historical documents on a large scale. One major hurdle is the lack of large datasets for training robust models. In particular, little training data exist for Asian languages. To this end, we present HJDataset, a Large Dataset of Historical Japanese Documents with Complex Layouts. It contains over 250,000 layout element annotations of seven types. In addition to bounding boxes and masks of the content regions, it also includes the hierarchical structures and reading orders for layout elements. The dataset is constructed using a combination of human and machine efforts. A semi-rule based method is developed to extract the layout elements, and the results are checked by human inspectors. The resulting large-scale dataset is used to provide baseline performance analyses for text region detection using state-of-the-art deep learning models. And we demonstrate the usefulness of the dataset on real-world document digitization tasks.

Paper

This paper is accpeted at the CVPR2020 Workshop on Text and Documents in the Deep Learning Era. You can find our preprints Here.

Dataset

Notice: Due to some copyright issues, we could not directly release the images. Please fill out this form to issue a request to download the images. Thank you!

Please check this link to download the dataset. You may also find the following quick notes helpful when using HJDataset.

Starter Code

Please check our github repository.

Pretained Models

We pretrained three models over the HJDataset, namely, Faster RCNN, Mask RCNN, and RetinaNet. The implementation is based on Detectron2. Besides the model weights, we also include the configuration files (config.yml) in the donwload links to improve reproducibility.

| Model Name | Faster R-CNN | Mask R-CNN | RetinaNet |

|---|---|---|---|

| Download Link | faster_rcnn_R_50_FPN_3x | mask_rcnn_R_50_FPN_3x | retinanet_R_50_FPN_3x |

| mAP (%) | 81.991 | 81.343 | 75.223 |

Copyright

- Annotations in the dataset are released under the Apache 2.0 License.